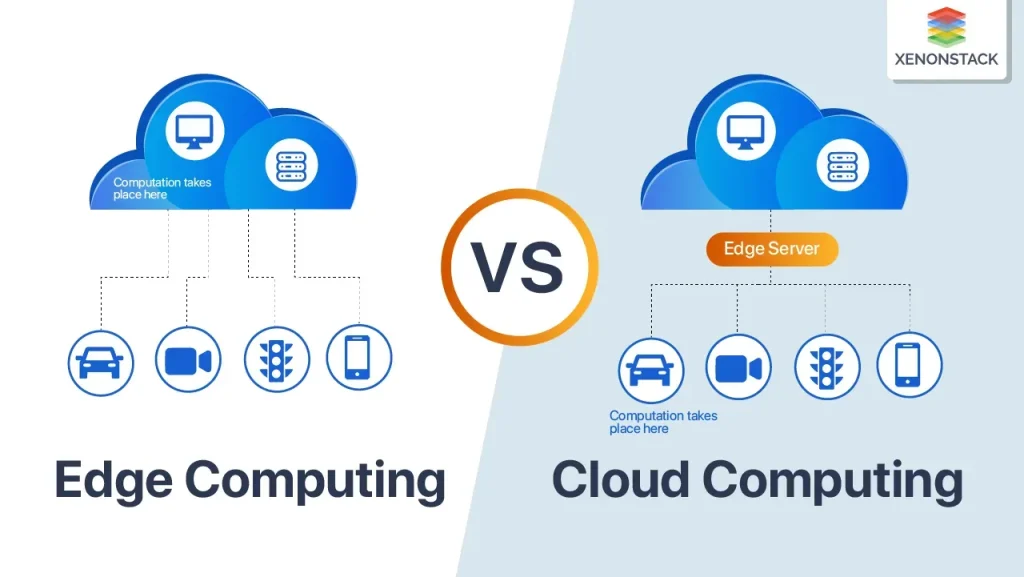

Edge computing and cloud computing are two pillars of modern technology architecture. Together they shape how data is processed, stored, and delivered to users, and they influence data privacy, compliance, and user experience. As applications demand lower latency, faster decisions, and resilient operations, deciding where processing happens becomes a strategic choice rather than a simple preference, with consequences for network bandwidth, developer productivity, and time-to-market. This introduction outlines the core differences, trade-offs, and practical patterns that help teams balance performance, cost, compliance, risk, and long-term maintainability across on-premises, edge, and cloud environments. Understanding the advantages of edge computing, how cloud computing differs, and the benefits of hybrid cloud and edge integration lets you map each workload to the right layer while accounting for governance and security. The sections that follow explore when to push processing to the edge, when to centralize in the cloud, and how to orchestrate both, including how to select workloads, estimate costs, and plan governance across distributed sites.

Beyond the phrasing of edge versus cloud, many teams describe a distributed computing approach where processing happens close to devices, often called fog computing or near-edge processing. In this framing, local gateways, micro data centers, and on-site servers take on immediate analytics tasks, while centralized cloud platforms handle long-term storage, governance, and large-scale analytics. This perspective aligns with related concepts such as edge analytics, on-device inference, and policy-driven data management, helping organizations plan scalable architectures that maintain performance even when network connectivity fluctuates. By thinking in terms of proximity, governance, and orchestration, teams can design hybrid implementations that blend local responsiveness with cloud-powered insights.

Edge Computing vs Cloud: Latency, Scale, and Real-World Use Cases

The advantages of edge computing show up when milliseconds matter. Moving processing closer to devices and sensors (on gateways, micro data centers, or the edge devices themselves) reduces latency enough to support near-instant decisions in control systems, industrial automation, and interactive experiences. Because less data travels over the network, bandwidth consumption also drops, which helps remote or bandwidth-constrained sites stay responsive even when connectivity is imperfect.

The cloud's strengths are scale, governance, and analytics. Centralized compute supports batch processing, long-term storage, AI model training, and orchestration across many locations. Centralization also adds a network round trip to every real-time decision and can demand significant bandwidth to move large data sets. In practice, successful architectures blend the two: the edge handles time-sensitive processing, and the cloud handles orchestration, data science, and enterprise analytics.
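The placement rule described above can be sketched as a small decision function. This is a minimal illustration, not a definitive method: the function name `choose_tier`, its parameters, and the example numbers are all hypothetical, and a real decision would also weigh cost, compliance, and hardware limits.

```python
def choose_tier(latency_budget_ms: float,
                cloud_rtt_ms: float,
                cloud_compute_ms: float,
                needs_offline: bool = False) -> str:
    """Return "edge" or "cloud" for a workload (illustrative rule only).

    latency_budget_ms: the longest response time the workload tolerates.
    cloud_rtt_ms:      assumed network round trip to the cloud region.
    cloud_compute_ms:  assumed processing time once the request arrives.
    needs_offline:     True if the workload must run without connectivity.
    """
    # A workload that must survive a network outage cannot depend on the cloud.
    if needs_offline:
        return "edge"
    # If the cloud path alone exceeds the budget, the work has to run locally.
    if cloud_rtt_ms + cloud_compute_ms > latency_budget_ms:
        return "edge"
    # Otherwise the cloud's elasticity and central governance are available.
    return "cloud"


# Hypothetical workloads: a control loop and a nightly report.
control_loop = choose_tier(latency_budget_ms=10, cloud_rtt_ms=40, cloud_compute_ms=5)
nightly_report = choose_tier(latency_budget_ms=60_000, cloud_rtt_ms=40, cloud_compute_ms=5_000)
print(control_loop, nightly_report)
```

The check is deliberately one-sided: a workload that fits within the budget is only eligible for the cloud, and other constraints may still keep it at the edge.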

Hybrid Cloud and Edge Integration: Designing for Performance, Governance, and Flexibility

Hybrid cloud and edge integration patterns harness the strengths of both domains. Edge gateways and micro data centers collect and preprocess data, then forward only relevant insights to the cloud for deeper analytics, model training, or centralized dashboards. This arrangement keeps latency low at the edge while preserving cloud-scale capabilities, governance, and security controls. With a shared orchestration layer and containerized services, organizations can push updates to edge devices and coordinate data pipelines consistently across sites.
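As a rough sketch of the "preprocess locally, forward only relevant insights" pattern, the example below reduces a window of raw sensor readings to a compact summary and forwards it only when it contains out-of-range values. The `Summary` type, the function names, the thresholds, and the forwarding rule are assumptions made for illustration; a real gateway would apply whatever relevance criteria the workload calls for.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Summary:
    sensor_id: str
    count: int
    mean_value: float
    anomalies: list[float]


def summarize_window(sensor_id: str, readings: list[float],
                     low: float, high: float) -> Summary:
    """Reduce a window of raw readings to a compact summary at the edge."""
    if not readings:
        raise ValueError("readings must not be empty")
    # Readings outside the [low, high] band are kept verbatim as anomalies.
    anomalies = [r for r in readings if r < low or r > high]
    return Summary(sensor_id, len(readings), mean(readings), anomalies)


def should_forward(summary: Summary) -> bool:
    """Hypothetical rule: only windows that contain anomalies go to the cloud."""
    return bool(summary.anomalies)


# Four raw readings collapse into one summary; one reading is out of range.
window = [20.1, 20.3, 35.7, 20.2]
summary = summarize_window("temp-01", window, low=15.0, high=30.0)
print(summary.anomalies, should_forward(summary))
```

Forwarding a summary instead of every raw reading is where the bandwidth saving comes from; the raw window can stay on the gateway for local analysis.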

Performance and compliance in a hybrid setup depend on data governance, security, and resilience. You define which data stays at the edge, which data moves to the cloud, and how to maintain end-to-end visibility with monitoring and logging. Data sovereignty policies often favor edge processing for sensitive information, while cloud platforms support scalable analytics and centralized governance. With careful planning, hybrid cloud and edge integration delivers fast local decisions on the ground and cloud-scale insights in the boardroom.
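One way to make the "what stays, what moves" decision explicit is a small placement policy that every record passes through, with each decision logged for visibility. The sketch below is an assumption-laden illustration: the data-class labels, the `Placement` enum, and the conservative default for unclassified data are hypothetical, and an actual policy would come from the organization's own governance rules.

```python
import logging
from enum import Enum

logging.basicConfig(level=logging.INFO)
logger = logging.getLogger("placement")


class Placement(Enum):
    EDGE_ONLY = "edge_only"
    CLOUD = "cloud"


# Hypothetical labels for data that must not leave the site.
EDGE_ONLY_CLASSES = {"pii", "health", "video_raw"}


def place_record(record: dict) -> Placement:
    """Decide where a record may be stored based on its data class."""
    data_class = record.get("data_class", "unclassified")
    # Unclassified data is treated conservatively and kept at the edge.
    if data_class in EDGE_ONLY_CLASSES or data_class == "unclassified":
        return Placement.EDGE_ONLY
    return Placement.CLOUD


def route(records: list[dict]) -> dict[Placement, list[dict]]:
    """Split records by placement and log every decision."""
    routed: dict[Placement, list[dict]] = {p: [] for p in Placement}
    for rec in records:
        placement = place_record(rec)
        logger.info("record %s -> %s", rec.get("id"), placement.value)
        routed[placement].append(rec)
    return routed


records = [
    {"id": 1, "data_class": "pii"},
    {"id": 2, "data_class": "telemetry"},
    {"id": 3},  # no data_class, so it stays at the edge
]
routed = route(records)
print(len(routed[Placement.EDGE_ONLY]), len(routed[Placement.CLOUD]))
```

Keeping the policy in one function means the same rule can be audited, tested, and deployed to every site through the shared orchestration layer.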

Frequently Asked Questions

What are the main advantages of edge computing compared with cloud computing, and how should they influence workload design?

Edge computing offers lower latency by processing data near the source, reduced bandwidth use, and better resilience when connectivity is intermittent. Cloud computing offers elastic scalability, centralized governance, access to advanced analytics, and long-term storage. When designing workloads, place time-sensitive processing at the edge and use cloud resources for heavier analytics and orchestration.

How does hybrid cloud and edge integration support an effective architecture, and which use cases benefit most from lower latency at the edge?

Hybrid cloud and edge integration combines on-site processing with cloud services, enabling real-time decisions at the edge while using cloud resources for model training, data science, and global coordination. Lower latency is the core benefit in use cases where milliseconds matter, such as industrial automation, autonomous systems, remote monitoring, and smart devices. The same pattern also supports on-site data filtering and local analytics before insights are sent to the cloud.

| Aspect | Edge Computing | Cloud Computing |
|---|---|---|
| Latency / Response Time | Lower latency via on-site processing; ideal for time-sensitive apps. | Central processing adds some latency but enables complex processing and orchestration. |
| Data Volume / Bandwidth | Data reduction/filtering at the edge; sends only relevant insights. | Handles long-term storage and analytics on aggregated data; supports large-scale processing. |
| Scalability / Elasticity | Resource-limited; predictable performance for localized workloads. | Virtually unlimited compute/storage; easy on-demand scaling. |
| Reliability / Offline Operation | Operates independently of cloud connectivity; resilient in remote locations. | Depends on network connectivity; can be designed with redundancy and offline support. |
| Security & Compliance | Edge devices require robust device security, encryption, and trusted execution; perimeter increases attack surface. | Centralized controls and governance; regional compliance still required; risk concentrated if a region is compromised. |
| Data Sovereignty / Privacy | Keeps sensitive data near origin; supports data sovereignty. | Governed by cloud-region policies; data placement across regions matters for compliance. |
| Cost / Total Cost of Ownership | Upfront hardware, maintenance; can reduce data transfer costs. | Pay-as-you-go compute/storage; ongoing data transfer and analytics costs. |
| Hybrid Integration | Edge gateways process data locally; forward insights to cloud; common orchestration. | Cloud for analytics, model training, and orchestration; global coordination from a central platform. |
| Choosing Where to Run Workloads | Latency-sensitive, privacy, bandwidth constraints favor edge for milliseconds-level decisions. | Large models, data lake processing, and cross-site orchestration favor cloud. |
| Real World Use Cases | Industrial automation, remote monitoring, on-device analytics; autonomy in devices. | Data lakes, AI model training, SaaS, enterprise apps; centralized dashboards. |

Summary

The table above compares edge and cloud computing across latency, data handling, scalability, reliability, security, data sovereignty, cost, hybrid patterns, workload placement, and use cases, showing how each architecture addresses different requirements.